Apache Spark is established as a strong data processing engine for data workflows that are large or complex enough to benefit from distributed processing across multiple compute nodes. I’ve created this demo from a Spark instance I spun up effortlessly and free of charge in DataBricks community.

While RDD’s (Resilient Distributed Datasets) remain a foundation on which Spark logically operates, it is Spark 2.0’s more integrated DataFrames, similar to relational tables, that make programming Spark 2 progressively easier to perform. SparkSQL, obviously supporting SQL queries in Spark, is an enticing, maybe beguiling, capability, too. With that, and knowing that a gazillion SQL developers could potentially harness SparkSQL for processing large datasets, some interesting questions naturally arise.

Questions:

- For a novice-level SQL query, what does the equivalent PySpark DataFrame code look like?

- If Spark, including PySpark, has capabilities — for example: Linear Regression, Machine Learning, and GeoSpatial, all of which are outside the scope of this post — that are outside of what would normally be accomplished with a SQL query, or SQL-based ETL process, then does this justify learning PySpark DataFrame syntax up front instead of leaning heavily on SQL expertise?

Let’s address those questions with a use case, write the Spark code and explain our way through these two ways of querying Spark, then summarize our findings and note any takeaways. But, before I begin…

I wrote this code in Python 3.7 from a Jupyter Notebook in the databricks community cloud on a cluster I spun up to run Spark 2.4.5. More importantly, this code is the result of me completing a Spark DataFrame project exercise during Jose Portilla’s PySpark course on Udemy. Additionally, as a Spark noob, my goal with this post is to memorialize my personal exploratory path and learnings — as I have done in Python, NumPy, and Pandas — not to tout myself as an authority. I am an expert in BI/DW/SQL, and while I also have confidence in writing Python for data prep, Spark is a platform that I’m just dipping my toes into. With that, here we go…

Source Data and Code:

My underlying code and the source dataset for this exercise are both available in GitHub. Look for the line reading “PySpark DF Syntax and SparkSQL — Derived Fields – Group By – Aggregate and Sort” (.txt). For the data source, look a bit further down for the “walmart_stock.csv” file.

Simple Data Transformation Use Case:

From a dataset showing the DAILY opening price, high, low, closing price, and trading volume of Walmart stock over multiple years, what is the MONTHLY average closing price in descending order from most recent?

Steps:

Note: Date is provided, so first we will…

- Derive Year from Date, then…

- Derive Month

- Group By Year, Month

- Aggregate Close as a Monthly Average (mean)

- Output: Year, Month, Average Close (most recent at the top)

Equivalent ANSI-SQL query to return the solution from a relational table is:

SELECT Year(Date) AS Year, Month(Date) AS Month, AVG(Close)

FROM WalmartStockTbl

GROUP BY Year(Date), Month(Date)

ORDER BY Year DESC, Month DESC

While the aforementioned GitHub repository contains all code and source data, here are the essential screenshots and code highlights:

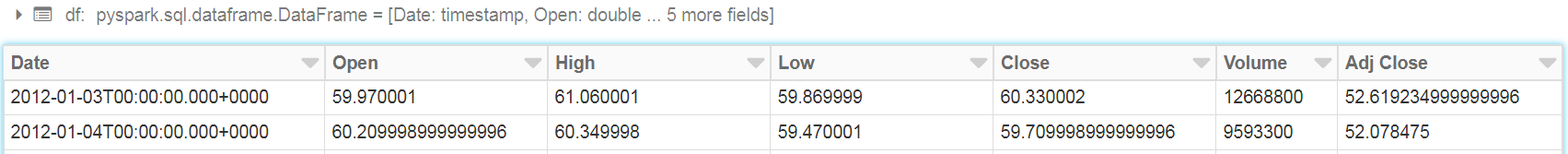

A Glimpse of the Source DataSet: Field names and top two rows

PySpark Code Highlights): See GitHub for session and library importing code

# To create initial dataframe from the source file…

df = spark.read.csv(‘/FileStore/tables//U_SDFB/walmart_stock.csv’,inferSchema=True,header=True)

# To derive year and month…

df_withYear = df.withColumn(‘Year’,year(df[‘Date’]))

df_wYrMo = df_withYear.withColumn(‘Month’,month(df[‘Date’]))

# To group by year, month, average Closing Price, and placing most recent month first.

df_AvClosByYrMo = df_wYrMo.groupBy(‘Year’,’Month’).agg({‘Close’:’mean’}).orderBy( df_wYrMo[‘Year’].desc(), df_wYrMo[‘Month’].desc() )

Spark Job Results: Top three rows

# Now, let’s do the same using SparkSQL:

# First, re-import the CSV file into another DataFrame in our Spark session

df_2 = spark.read.csv(‘/FileStore/tables//U_SDFB/walmart_stock.csv’,inferSchema=True,header=True)

# With this new dataframe, we will do some admin to create a Hive-like SQL view (neither materialized nor cached outside this session) in order to then write an ANSI-SQL query.

df_2.createOrReplaceTempView(“WalmartStockTbl_2”)

# With the prep done, here is our SQL Query that returns the same exact result.

spark.sql (

‘select Year(Date) as Year, Month(Date) as Month, avg(Close) from walmartstocktbl_2 group by year(Date), month(Date) order by Year(Date) desc, Month(Date) desc’

).show()

# Results: Exactly same as above screenshot with PySpark results

_____________________________________________________________________________________

With that, what have I demonstrated, what learned, and what takeaway impression made?

- Compared to Python Pandas DataFrames, PySpark DataFrames code looks more quirky to me. One example is PySpark’s bulky code for descending order.

- As with Pandas, breaking down the work into chunks, using successive DataFrames, makes PySpark coding…

- …very achievable by mortals with time to spend learning it.

- …until we (I) get comfortable writing complex one-liner PySpark code, we can easily reverse-engineer the above PySpark steps back into a one-liner in the same fashion as I demonstrated in my recent Pandas post.

- SQL in Spark: With my (and many others’) higher expertise in SQL than PySpark DataFrames syntax, it seems to be an enormous advantage for a SQL Pro to just embed complex SQL here. Having said that, I do not assume that SQL will accomplish anything close to all of the logic that we will likely want to run through Spark. Therefore, gaining comfort with PySpark syntax (or Scala, Java) is most likely foundational to becoming truly productive with Spark.

With that, our journey through modern data and analytics continues, soon taking us into Amazon Web Services.

Thank you for reading this post!

Although I have no specific goal to amass subscribers here, feel free to post a comment or a like. Thanks again.

One thought on “PySpark or SparkSQL for Data Wrangling”